RE: “What does CUDA team do (now)?”

“We just solve issues we encounter trying to use Nix for our purposes” is not an unfair assessment, but it’s uninformative. Most of the issues we encounter cannot be solved in any satisfactory manner by just rearranging something inside Nixpkgs’ cudaPackages scope. On the contrary, the problems usually show up at the interfaces with other ecosystems and other projects. As mentioned during the chat, we’ve got a public project board at CUDA Team · GitHub. It’s got a “roadmap” column that we update occasionally. Filtering out the noise, the big items on it have to do with “how do we finance”, “how do we link impure dependencies in general, and especially in the FHS environments”, “how do we address nvcc’s toolchain requirements and stay compatible with the rest of Nixpkgs”, etc. Nobody in the CUDA team is much of an expert in cross-compilation or in Nixpkgs’ setup hooks system, but these are the subsystems we have to interact with. From the other direction, dealing with unfree and impure dependencies has never been Nixpkgs’ first priority. So a large part of what we do is ask questions, and ask whether we may adjust the interfaces.

So here are just a few stones we keep stumbling on.

aarch64-linux

As mentioned above, we’ve just merged the aarch64-linux support for cudaPackages, whose main target is the Jetson single-board devices for edge computing: cudaPackages: support multiple platforms by ConnorBaker · Pull Request #256324 · NixOS/nixpkgs · GitHub. This change was in preparation for literally months and required a substantial rewrite of cudaPackages. Now that it’s been merged, it’s a matter of adjusting the downstream packages that might still rely on older features that we can only support for x86_64-linux, and only for so long. One such “feature” is the legacy runfile-based cudaPackages.cudatoolkit, which is still used e.g. by python3Packages.tensorflow: CUDA-Team: migrate from `cudatoolkit` to `cudaPackages` · Issue #232501 · NixOS/nixpkgs · GitHub

cuda_compat

As mentioned above, we want to use the cuda_compat driver whenever legally available (unfortunately, that doesn’t include consumer-grade GPUs) because of the relaxed compatibility constraints and because we can probably link it in the applications directly. However, we don’t really know how to use it “correctly”, since it’s got impure dependencies and their compatibility constraints are undocumented. All we can do is test a number of options, and see for ourselves what works and what doesn’t:

- cudaPackages: improve the handling of cuda_compat · Issue #273797 · NixOS/nixpkgs · GitHub,

- CUDA on NVidia Jetsons · Issue #11 · numtide/nix-gl-host · GitHub,

- Prepare for cuda_compat usage: make libnvrm* available in /run/opengl-driver by yannham · Pull Request #160 · anduril/jetpack-nixos · GitHub.

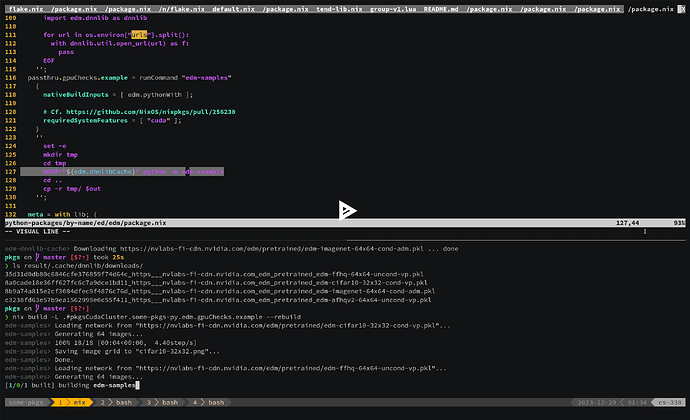

Using GPUs during the nix-build

We’ve run our edm toy directly on a local machine, on the cluster using a singularity container, and on an embedded device. There’s one more target we left out from our presentation: the Nix sandbox itself. The idea is that we can conditionally expose the GPU devices in the sandbox when a derivation declares that they are required. Moreover, we can inform Nix that the derivation can’t be built on just any host, but only on an appropriately configured one, by including a special marker in the derivation’s requiredSystemFeatures list. In our case we called the marker "cuda", we configured the builder to declare it in its system-features (man nix.conf), and we mounted the device files into the sandbox using this experimental PR: GPU access in the sandbox by SomeoneSerge · Pull Request #256230 · NixOS/nixpkgs · GitHub. As noted in the comments inside the PR, there potentially are alternative approaches, as well as ways to expand on this work and do more cursed magic.
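A minimal sketch of what the derivation side of this might look like, assuming a builder that advertises "cuda" and exposes the devices in its sandbox as described above. The name gpu-check and the choice of python3Packages.torch for the check are illustrative and are not taken from the PR:

```nix
# gpu-check.nix: a hypothetical runtime check modeled as a derivation.
{ pkgs ? import <nixpkgs> {
    # Assumption: the user accepts the unfree CUDA licenses
    # and wants CUDA-enabled builds of the dependencies.
    config.allowUnfree = true;
    config.cudaSupport = true;
  }
}:

pkgs.runCommand "gpu-check"
  {
    nativeBuildInputs = [ (pkgs.python3.withPackages (ps: [ ps.torch ])) ];
    # Nix only schedules this build on machines whose `system-features`
    # (see `man nix.conf`) include "cuda".
    requiredSystemFeatures = [ "cuda" ];
  }
  ''
    # Fails the build if no usable GPU is visible inside the sandbox.
    python -c "import torch; assert torch.cuda.is_available()"
    touch $out
  ''
```

On a host that doesn't list "cuda" among its system-features, Nix should refuse to build this locally and look for a suitable remote builder instead. Whether the check passes on a host that does list it depends entirely on how the sandbox has been configured there.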

(View the recording at nix build github:SomeoneSerge/pkgs#pkgsCuda.some-pkgs-py.edm.gpuChecks.example - asciinema.org)

A real-time version, with all the mistakes and typos:

https://asciinema.org/a/YiNNMCvp9oEkQbar8hSeTqayu

Dynamic linker’s search paths and their priorities

As mentioned above, sometimes we can’t link libraries directly. This is currently true for some of the libraries associated with OpenGL, and it is true for libcuda. Some programs have valid reasons to use dlopen and to expect the user to provision the dependencies at runtime. E.g. these dependencies could be some kind of plug-ins. This is why it’s important that we have a way to communicate with the dynamic linker (e.g. ld-linux.so) and adjust its behaviour at runtime. This is, for example, what the variables LD_LIBRARY_PATH and LD_PRELOAD are: they override at runtime the behaviour we fix in Nixpkgs. Nixpkgs allows that, and it relies on that (otherwise we couldn’t run any of Nixpkgs’ graphical applications on Ubuntu), and in that sense they are part of Nixpkgs’ interface, and part of its contract. In fact, Nixpkgs even chooses which dynamic loader to ship with the program and which patches to apply.

It’s a known issue with Nixpkgs, that the user cannot just set LD_LIBRARY_PATH=/usr/lib: this would not just help the dynamic linker find the missing dependencies, it’d also make it discard the other “correct” dependencies we had linked directly. Normally this would result in a runtime error, because of the compatibility constraints of some common libraries, notably the libc. This is part of the reason wrappers such as numtide/nix-gl-host exist.

This priority issue, however, doesn’t concern just CUDA and OpenGL. For example, the LD_LIBRARY_PATH variable is sometimes abused by other systems, which makes Nixpkgs software fail “by default”. Cf. e.g. Nix Package Manager on Arch Linux: "`GLIBC_2.38' not found" Error · Issue #287764 · NixOS/nixpkgs · GitHub, and, more generally, Any way for LD_LIBRARY_PATH to *not* break nix-env's absolute paths for dynamic libs · Issue #902 · NixOS/nix · GitHub.

Properly addressing all of these issues would likely require an upstream change in glibc, rather than just in Nixpkgs. For instance, our use-cases might benefit both from a lower-priority version of LD_LIBRARY_PATH, and from a higher priority version of DT_RUNPATH (e.g. the deprecated DT_RPATH):

- glibc: implement `LD_FALLBACK_PATH` environment variable by oxij · Pull Request #248547 · NixOS/nixpkgs · GitHub

- glibc: Add a NIX_LD_SO_CACHE environment variable to glibc by Atry · Pull Request #248777 · NixOS/nixpkgs · GitHub

These efforts naturally overlap e.g. with draft: per-dso .so resolution cache on glibc by pennae · Pull Request #207893 · NixOS/nixpkgs · GitHub.

The UX of Nixpkgs’ CUDA in FHS distributions like Ubuntu depends on the resolution of these larger-scale questions involving multiple parties and external projects. Thus the problem for us is just to navigate, and to decide when we care enough to push the matter on our side.

Licenses, policies, and exceptions

The cudaPackages are “unfree”. Specifically, their meta.license contains a record marked with free = false. Additionally, their meta.sourceProvenance almost always includes binaryNativeCode. This metadata is there to signal that these packages provide fundamentally weaker guarantees about reliability, repeatability, reproducibility, and security, compared to the rest of Nixpkgs. It tells Hydra and Ofborg not to build or cache them. In a very implicit way, it also tells Nix’s command line tooling to refuse to build or even evaluate these packages without the user’s express consent.
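To make the metadata concrete, here is a small sketch of a package that would be treated the same way. The package itself (example-blob) is made up for illustration; only lib.licenses.unfree and lib.sourceTypes.binaryNativeCode are real Nixpkgs names:

```nix
# A hypothetical package standing in for a prebuilt proprietary binary.
{ pkgs ? import <nixpkgs> { } }:

let
  inherit (pkgs) lib;
in
pkgs.stdenv.mkDerivation {
  pname = "example-blob"; # illustrative name, not a real package
  version = "0.0.0";

  # No sources: the output is just an empty directory.
  dontUnpack = true;
  installPhase = ''
    mkdir -p $out
  '';

  meta = {
    # `lib.licenses.unfree` is a license record with `free = false`.
    license = lib.licenses.unfree;
    # Signals that the output contains prebuilt machine code
    # rather than something compiled from source.
    sourceProvenance = [ lib.sourceTypes.binaryNativeCode ];
  };
}
```

With a default Nixpkgs configuration, asking for this expression's outPath should be refused until the user opts in, e.g. with config.allowUnfree.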

The real interface, at the time of writing, is largely implemented in Nixpkgs: the permissions (policies) are expressed as arguments to the nixpkgs instantiation, e.g. the allowUnfree in import nixpkgs { config.allowUnfree = true; }; the enforcement is implemented using exceptions; normally, the exceptions aren’t thrown from the package expressions, but are deferred using the meta attributes (meta.broken, meta.knownVulnerabilities, meta.license, etc); a package disallowed by the user’s policy cannot be evaluated. Concretely, we couldn’t compute cudaPackages.cuda_nvcc.outPath without accepting the license at the time of instantiating Nixpkgs, and we couldn’t even instantiate the corresponding .drv file:

❯ nix eval nixpkgs#cudaPackages.cuda_nvcc.outPath

...

error: Package ‘cuda_nvcc-11.8.89’ in /nix/store/4fgs7yzsy2dqnjw8j42qlp9i1vgarzy0-source/pkgs/development/cuda-modules/generic-builders/manifest.nix:230 has an unfree license (‘CUDA EULA’), refusing to evaluate.

...

❯ nix path-info --derivation nixpkgs#cudaPackages.cuda_nvcc

...

error: Package ‘cuda_nvcc-11.8.89’ in /nix/store/4fgs7yzsy2dqnjw8j42qlp9i1vgarzy0-source/pkgs/development/cuda-modules/generic-builders/manifest.nix:230 has an unfree license (‘CUDA EULA’), refusing to evaluate.

...

Since we could compute neither the .drv name nor the output path, we couldn’t possibly build or substitute this dependency: we’re “safe” from accidentally running the potentially malicious program. Nonetheless, this interface, or this mechanism, is not without limitations.

The nixpkgs’ config parameter at the Nix language level maps into --arg in the Nix CLI tools, but only when Nixpkgs itself is the entrypoint. E.g. nix-build '<nixpkgs>' --arg 'config' '{ allowUnfree = true; }' -A ... is always valid, but the support for nix-build default.nix --arg 'config' '{ allowUnfree = true; }' -A ... in a user’s project would have to be implemented ad hoc: the user would have to write the logic to pass the config from their default.nix to Nixpkgs. This complicates enforcing policies such as: “no project on this host is allowed to use unfree or insecure dependencies”.
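For illustration, the ad hoc forwarding could be as small as the sketch below. The file layout and the nvcc attribute name are assumptions made up for this example:

```nix
# default.nix of a hypothetical downstream project.
{ nixpkgs ? <nixpkgs>
, # Forwarded verbatim to Nixpkgs; empty unless the caller overrides it.
  config ? { }
}:

let
  pkgs = import nixpkgs { inherit config; };
in
{
  # Illustrative attribute; it only evaluates if `config` permits unfree packages.
  nvcc = pkgs.cudaPackages.cuda_nvcc;
}
```

With this in place, nix-build default.nix --arg config '{ allowUnfree = true; }' -A nvcc would behave like the '<nixpkgs>' invocation above. Nothing forces a project to expose such a parameter, though, which is exactly what makes a host-wide policy hard to enforce.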

The experimental Nix “Flakes” do not currently help with this issue. In a way, they make it more severe: now that it’s easier to propagate deeply nested transitive dependencies into one’s project, it’s harder to verify if these dependencies respect a policy, since each dependency could simply instantiate and configure its own copy of Nixpkgs. In fact, in the pure evaluation mode, that is the only way to use unfree dependencies with Flakes. This is further complicated by the fact that Flakes are relatively agnostic of the Nix language and of the Nixpkgs-level concepts: the Flake outputs, specifically packages and legacyPackages, correspond to the .drvs and to the store paths, rather than to “the thing that goes into the callPackage”, or “the thing with an override method, i.e. the thing returned by callPackage”. While abstractions may leak, and the interfaces may be blurry, one can see e.g. that Flakes do not yet support either --arg or --apply.
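A sketch of the pattern in question, i.e. a flake that configures its own private copy of Nixpkgs. The choice of branch, system, and output is arbitrary and only serves the example:

```nix
# flake.nix of a hypothetical project that opts into unfree packages on its own.
{
  inputs.nixpkgs.url = "github:NixOS/nixpkgs/nixos-unstable";

  outputs = { self, nixpkgs }:
    let
      system = "x86_64-linux";
      # A private Nixpkgs instance: consumers of this flake
      # have no say in its `config`.
      pkgs = import nixpkgs {
        inherit system;
        config.allowUnfree = true;
      };
    in
    {
      packages.${system}.default = pkgs.cudaPackages.cuda_nvcc;
    };
}
```

Anyone who depends on this flake's packages output gets a derivation that already passed the license check, regardless of the policy they apply to their own Nixpkgs instance.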

Preventing evaluation isn’t necessarily the behaviour we really want either. One could argue, although the example is a bit of a stretch, that this isn’t sufficiently safe because the .drv files and output paths are oblivious to their licenses and vulnerabilities, and so if we had obtained a .drv by means other than evaluation, we could build it, and we could substitute it. Maybe more importantly, the interrupted evaluation means that we cannot assign a name to the package we’re prohibiting, at least not the way we name derivations and outputs. This sometimes results in very odd artifacts. For example, the Nix Bundlers project comes with a transformation called toReport, which takes a flake output, and produces a report about the licenses of its dependencies. The irony is that in order to generate a report about a project that depends on unfree dependencies, the user would first have to “accept” their unfree licenses:

❯ nix bundle --bundler github:NixOS/bundlers#toReport nixpkgs#cudaPackages.cuda_nvcc

...

error: Package ‘cuda_nvcc-11.8.89’ in /nix/store/dwikaw0zm70m2jlv1ngjz2xf9j213jqm-source/pkgs/development/compilers/cudatoolkit/redist/build-cuda-redist-package.nix:167 has an unfree license (‘unfree’), refusing to evaluate.

...

❯ NIXPKGS_ALLOW_UNFREE=1 nix bundle --bundler github:NixOS/bundlers#toReport --impure nixpkgs#cudaPackages.cuda_nvcc

In the same vein, if nixos-rebuild stumbles on an insecure package after updating a flake or a channel, the only way to inspect the dependency tree and find its reverse dependencies is to first relax the policy and allow insecure packages:

❯ nixos-rebuild switch --use-remote-sudo -L

...

error: Package ‘electron-22.3.27’ in /nix/store/wr9zl1fj8ypwzlvybxjxzwb3ama4y9sw-nixpkgs-patched/pkgs/development/tools/electron/binary/generic.nix:35 is marked as insecure, refusing to evaluate

...

❯ # Let's find out who's using electron:

❯ nix-tree --derivation .#nixosConfigurations.$(hostname).config.system.build.toplevel

error: cached failure of attribute 'nixosConfigurations.cs-338.config.system.build.toplevel'

nix-tree: Received ExitFailure 1 when running

Raw command: nix path-info --json --extra-experimental-features "nix-command flakes" .#nixosConfigurations.cs-338.config.system.build.toplevel

❯ NIXPKGS_ALLOW_INSECURE=1 nix-tree --impure --derivation .#nixosConfigurations.$(hostname).config.system.build.toplevel

...

Scheduling, remote builders, and benchmarks

We build and cache packages with CUDA support. We know when they break, in terms of commit ranges. We keep the history of the builds. We could infer how a change in Nixpkgs may have affected the results, e.g. in terms of build times, or in terms of dependency graphs. We’ve mostly lost the information about how the closure sizes have been evolving because of the garbage collection, but it’s not hard to start collecting this data, and we could rerun the builds for the chosen historical nixpkgs revisions too. What we don’t know is how our changes have been affecting the “performance”. To infer this, we need to (design and) start running benchmarks.

Our CI currently is “Nix-based”, and likely will remain so. Specifically, we use Hercules CI, which allows us to describe the jobs declaratively as Nix language expressions, and with the help of the so-called “Module System” that many are familiar with through NixOS. The “jobs” primarily consist of normal derivations, and a job is “successful” when all its constituent derivations are successfully built. This is a pretty convenient (familiar) abstraction. The constraint that it comes with is that the builds have to run inside the Nix sandbox, and they also have to be scheduled by Nix.

We have already seen that the interfaces can be bent enough that we could, for example, access the GPUs from inside the sandbox. That is sufficient for the runtime checks, like verifying that torch.cuda.is_available() returns True with an appropriate build of torch and on an appropriately configured host.

Benchmarking would require an extra feature from Nix’s “distributed builds” system: if we modeled a benchmark as a derivation, we’d need a way to tell Nix to dedicate an entire builder for the derivation’s exclusive use. In @RaitoBezarius’ words, we’d need to implement “negative affinity” in Nix.

RE: “Profile-guided optimization”

Claims of the form “we can’t do X, because it’s impure” are often a lie and might not even serve their purpose. What might be implied is that “we could do X at the cost of sacrificing certain benefits, but it really is the wrong thing to do”. In the discussion with @fricklerhandwerk I was careless to claim that “we’d be reluctant to enable profile-guided optimization, because it’s bad for determinism” and I regret saying that. First, because it wasn’t for me to say that. Secondly, because modeling builds that rely on profile-guided optimization is a perfectly valid task for the derivation model.

Nix and Nixpkgs do allow various impurities. For one thing, every derivation’s build in Nixpkgs currently does depend on the builder’s process scheduling, and on its load at the time of the build. This is the reason why we take care to ensure profile-guided optimization is disabled in the first place. In the larger scheme of things, we could even think of Nix and Nixpkgs as impurity management machines. It’s not that Nix magically makes everything pure and reproducible, but that it provides a tool to systematically declare “everything that we imagine matters for executing the recipe” in a .drv and, at build time, to isolate the build from everything we hadn’t declared, wherever possible. Isolating from the scheduler, for example, is likely expensive and hasn’t been implemented yet.

From this perspective, there isn’t a fundamental reason we couldn’t support the users’ efforts to enable profile-guided optimization (etc) in their own CIs and binary caches. If we knew we were going to run a program on e.g. a bunch of Intel Xeons, and we wanted to optimize our software for the target machines, we could include all of this information in the .drv, including special markers in the requiredSystemFeatures that would tell Nix that this special derivation is only to be built on a host with a Xeon CPU (just as we asked for "cuda"), and only with exclusive access (just as with benchmarks). Whether these particular constraints are sufficient to secure a reasonable level of repeatability is really a decision for the user to make. Nix is only a modeling tool.

Here I stop before I generate more nonsense. If by accident any of the above scribbles had traces of a meaning, I owe this to the conversations with smart and talented people from the OceanSprint and NixCon, and from the NixOS matrix channels: @RaitoBezarius, @tomberek, @flokli, Valentin, edef, @DavHau, @zimbatm, @hexa, @samuela, @ConnorBaker, @matthewcroughan, @kmittman, Kiskae, (got to cut the list somewhere). We obviously rely a lot on Nixpkgs’ python team. I am particularly impressed that certain people (samuela, zeuner, GaetanLepage to name just a few) keep unbreaking and updating jaxlib and tensorflow, in spite of all of the Bazel woes. As far as I can tell, the reason I can use torch with ROCm is that @Madouura and @Flakebi went out of their way to bootstrap the support. Thanks all. Cheers